Goldbach's Conjecture and Coding Length

April 2, 2013

Goldbach's conjecture is that every even integer greater or equal to four can be written as the sum of two prime numbers. (Try it: $4 = 2 + 2$, $6 = 3 + 3$, $8 = 3 + 5$, $10 = 3 + 7$, $12 = 5 + 7$...) It occurred to me that if this conjecture were true, it could be used as a way to encode even integers greater than four, and that this encoding would need to be no more efficient than the most efficient encoding, which simply enumerates the even integers $n \ge 4$. If it were more efficient, this would constitute a counterproof of the conjecture, which is widely believed to be true.

The simple way to encode even integers $n \ge 4$ is to take $n \mapsto (n - 4) / 2$, yielding the set $\mathbb{N}_0 = \{0, 1, 2, \ldots\}$ as its image. We can then encode finite subsets of $\mathbb{N}_0$ in the usual way, as unsigned ints with some number $b$ bits. Thus, encoding all even integers from $4$ up to size $N$ would take $$\lceil \log_2((N - 4) / 2 + 1) \rceil = \lceil \log_2((N - 2) / 2) \rceil$$ bits.

If Goldbach's conjecture is true, we can write any even integer $n \ge 4$ as $n = p_1(n) + p_2(n)$, where $p_1(n)$ and $p_2(n)$ are primes. A "Goldbach-encoding" scheme for encoding all even numbers from $4$ up to size $N$ would then be to reserve the first $m_1$ bits to encode the $p_1(n)$, and the second $m_2$ bits to encode the $p_2(n)$. (So an encoded representation of an even integer $n \ge 4$ would be $m_1 + m_2$ bits, and to decode it you just read off the first $m_1$ bits to get $p_1(n)$, read off the latter $m_2$ bits to get $p_2(n)$, and then add them together).

Of course, since $p_1(n)$ and $p_2(n)$ are primes, we can do better than encoding them natively as unsigned ints. Instead, let's encode them based on which number prime they are, with the prime counting function, $\pi$. (So $\pi(2) = 1,\; \pi(3) = 2,\; \pi(5) = 3,\; \pi(7) = 4\ldots$). So our encoding for $p_i(n)$ will be writing out $\pi(p_i(n)) - 1$ as an unsigned int.

Since we want this encoding to be as efficient as possible, we should try to minimize $m_1 + m_2$, the total number of bits required for the Goldbach-encoding. Now, if we are Goldbach-encoding all even integers between $4$ and $N$, we need to pick $m_i$ big enough to encode the largest $p_i(n)$, ie, we need $$m_i = \max_{n \in \{4, \ldots, N\}} \lceil \log_2\left(\pi(p_i(n))\right) \rceil$$ Since the log function is concave, this means that we should minimize $p_1(n)$, or equivalently maximize $p_2(n)$, for each n. That is, we should pick $$p_1(n) = \min \{2 \le p \le n - 2:\; p \text{ is prime and } n - p \text{ is prime}\}$$ and $p_2(n) = n - p_1(n)$.

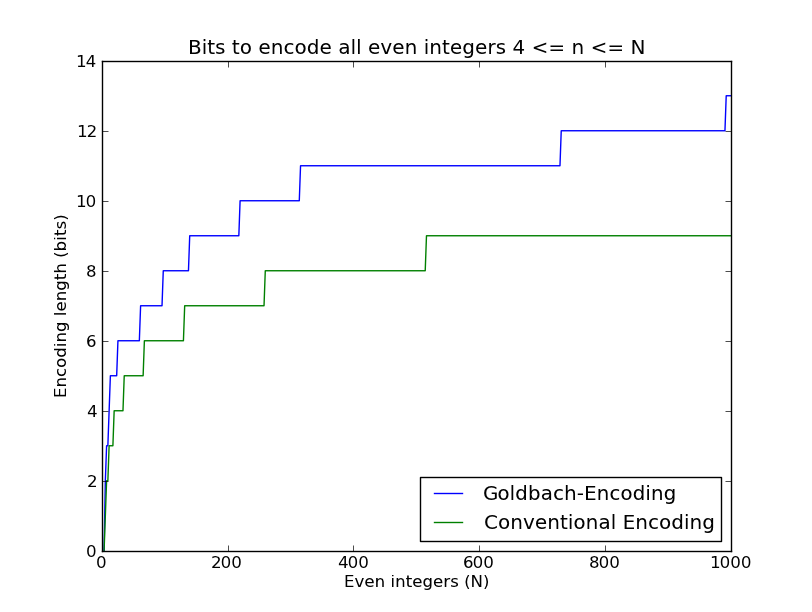

I went ahead and plotted these encoding lengths as a function of N, (source here):

As expected, the Goldbach-encoding length is longer than the conventional encoding length. In fact, it is impossible for this not to have been the case: If the Goldbach conjecture is true, Goldbach-encoding can't be any more efficient than ordinary encoding, and if the Goldbach conjecture is false, there would be some large even integer that I couldn't Goldbach-encode, and so I wouldn't even be able to make these plots for large enough $N$. (If you think the raise ValueError in my code is funny--congratulations, you share my sense of humor!)

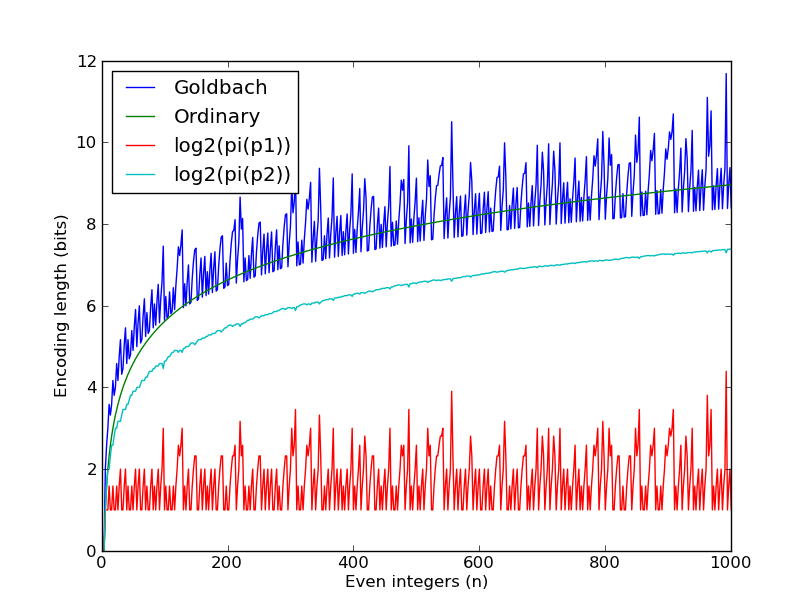

To help understand why the Goldbach-encoding length was substantially longer than the ordinary encoding length, I removed the ceiling functions, (which would probably be negligible anyway for large enough $N$), and simply plotted $\log_2(\pi(p_1(n)))$, $\log_2(\pi(p_2(n)))$, and $\log_2(\pi(p_1(n))) + \log_2(\pi(p_2(n)))$, (the latter of which is labeled "Goldbach" to save space). For comparison, I also plotted $\log_2((n - 2) / 2)$, which is labeled "ordinary".

This plot is a lot more interesting. First of all, it makes clear that the reason why the Goldbach-encoding takes up so much space is that you occasionally get relatively large $p_1(n)$ that you have to encode. You can also see how smooth $\log_2(\pi(p_2(n)))$ is--it looks like it's approximately equal to $\log_2(\pi(n)) \approx \log_2(n/\log(n))$--so the majority of the variation in the coding length for individual even numbers is coming from $p_1$.

And of course, for the Goldbach-denialists out there, it might be encouraging that you often get $$\log_2(\pi(p_1(n))) + \log_2(\pi(p_2(n))) \lt \log_2((n - 2) / 2)$$