You Can't Predict Small Counts

January 17, 2015

A small restaurant is interested in predicting how many customers will come in on a given night. This is valuable information to know ahead of time, for example, so that the restaurant can figure out whether to ask employees to work extra shifts. Unfortunately, under reasonable conditions no amount of data will permit even the most talented data scientist or statistician to make particularly good predictions.

Poisson Model

The reason is that there is too much fundamental variance associated with the nightly counts. As an illustrative approximation, we can model the process as follows: suppose the restaurant is in a city with n people. Each night, every person in the city decides whether or not to come in that night by independently flipping a p-coin. This model leads to a binomial number of people coming in each night: $$ Y = \sum_{i=1}^n X_i, $$ where $Y$ is the total number of people coming in that night and the $X_i$'s are independent Bernoulli(p)'s.

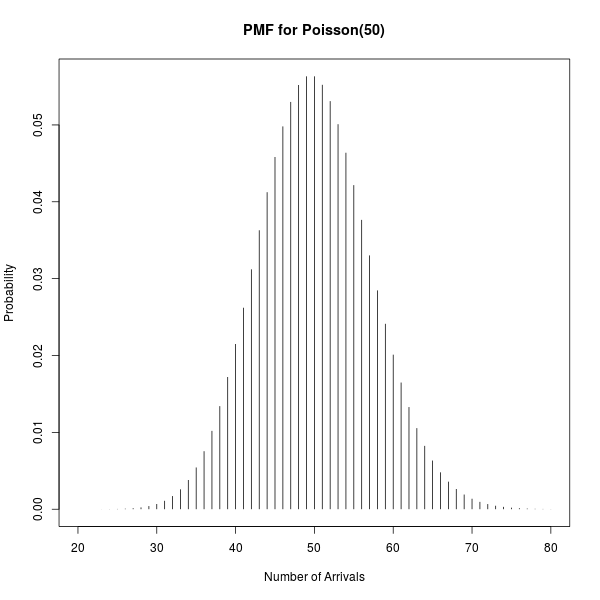

Now, n is probably pretty large, (there are a lot of people in the city), and p is probably pretty small, (any individual is relatively unlikely to attend on a given night), so that this binomial distribution is approximately Poisson. An important feature of the Poisson distribution is that it has equal mean and variance.

So, for example, suppose that on a given day the expected number of people coming in that night is about 50. Then the standard deviation of that count would be about 7 (actually sqrt(50)). Since the Poisson distribution with a large mean is approximately normal, if we go out two standard deviations on both sides we'll get an approximate 95% prediction interval: 36 to 64 people.

In other words, with approximately 95% probability somewhere between 36 and 64 people will show up. That is not a particularly impressive guess.

In practice, of course, we don't know the true expected number of people who'll show up. So we gather a bunch of historical restaurant attendance data and train a state of the art machine learning model to estimate that attendance from the day of week, day of month, day of year, weather that day, attendance the previous days, etc. But in the best case, all this allows us to do is predict the true expected number of customers.

Suppose, for example, that with all that fanciness we predict that the true expected number of people who'll show up is 48.0123, instead of our ballpark guess of 50. Since sqrt(50) is about the same as sqrt(48.0123), the error bars will still be of about the same width, (in fact, slightly less wide). So instead of our old interval, (from 36 to 64), we instead estimate the interval from 34 to 62. For many applications, isn't a very exciting difference.

(There are some applications where it is pretty useful, however: If a sushi restaurant has a policy of buying fish that morning so that they only run out 2.5% of the time, then using machine learning would allow them to buy for 62 people instead of 64 people, saving them a little money. The fundamental variance in the nightly arrivals, however, means that even with the best machine learning the restaurant will still be wasting quite a lot of fish on a typical day).

Overdispersed Poisson

There are a few interesting violations of the above Poisson model. One is that people do not choose whether to come to the restaurant independently. In fact, people often decide in small groups where to eat dinner, and then all go out together.

This leads to what statisticians call "overdispersion", where the variance in the number of people arriving is even higher than it would be under the Poisson distribution. A more reasonable model in this circumstance is that a Poisson number of groups come, and each group has some iid distribution over sizes. Letting $N$ be Poisson, and $Z_i$ be the size of the ith group, this model is $$ Y = \sum_{i=1}^N Z_i $$ (In the special case where $Z_i = 1$, then $Y = N$ and this is the Poisson model above).

It's not too hard to derive that the expected number of customers is the mean number of groups times the mean group size, and that the variance in the number of customers is the mean number of groups times the expected squared group size. That is, $$ E\left[Y\right] = E\left[N\right] E\left[Z_1\right] $$ and $$ Var\left[Y\right] = E\left[N\right] E\left[Z_1^2\right]. $$

This leads to significantly higher variance than in the above naive model. Instead of the variance equaling the expectation, it's higher by a factor of $$ \frac{E\left[Z_1^2\right]}{E\left[Z_1\right]}. $$

For example, suppose that each night an average of 25 groups come in. Suppose that a third of the groups have one person, a third have two people, and a third have three people. Then the average group has two people in it, so that an average of 50 (=25*2) people come in every night. The expected squared group size, however, is 14/3 = 4.67. This makes the standard deviation of the number of people who come in each night 10.8 (=sqrt(4.67*25)), substantially greater than 7.1, the standard deviation we'd have if each group had just one person.

The approximate 95% prediction interval then widens to anywhere from 28 to 72 people, making the prediction even less exciting. And this window will be even wider if you have a decent fraction of groups larger than 3 people. No matter how good your machine learning is, under this reasonable model you won't be able to make predictions with a mean square error of better than 10.8 people.

Individual-Level Predictions

Another interesting violation of the Poisson model is in the assumption that p is the same and small for all individuals or groups in the city. This assumption is clearly violated, for example, if the restaurant takes reservations. In that case, there are some people in the city whose probability of coming that night is near to 1. Unlike in the overdispersed Poisson model above, this violation has the effect of lowering the variance relative to the naive Poisson model, rather than increasing it.

The models above also implicitly assume temporal independence; that each night people make decisions to come independent of their previous decisions. However, if the restaurant has a population of "regulars" who come in most Friday nights, for example, then this assumption is violated and the variance in arrivals will be lower on Fridays than it otherwise would be.

More generally, you can prove the following theorem: Suppose $$ Y = \sum_{i=1}^n Z_i X_i, $$ where $n$ is the total number of potential groups, $Z_i$ (the size of the ith group) are iid, $X_i$ (whether the group comes) is a Bernoulli with success parameter $p_i$, and all the $Z_i$ and $X_i$ are independent. Then among all choices of $p_i$ with $E\left[Y\right] = \mu$, the choice of the $p_i$ that maximizes $Var\left[Y\right]$ has all $p_i = p$.

This is a long-winded way of saying that if some particular groups are more likely to come than others, the variance in the number of arrivals will be lower than it otherwise would be. If you have data that allows you to estimate which particular groups are going to come, you'll be able to do better than the "Poisson bound".

Fundamental Variance

Regardless of the true model, the thing to keep in mind is that for count data the fundamental variance associated with the counts can be quite high. This is particularly true if the counts are small or if you have overdispersion. If this is the case, it may be impossible for you to make good predictions, no matter smart you are or how many observations you collect.